Slide 1 - Lexical Analysis Overview

Lexical Analysis: Tokens, Lexemes, and Patterns

Understanding the First Phase of Compiler Design

---

Photo by Nicolas Arnold on Unsplash

This presentation introduces lexical analysis, the first phase of compiler design. It explains the role of the lexical analyzer, the difference between lexemes and tokens, the analysis process with examples, and the analyzer's position in the overall compilation process.

---

Photo by Markus Spiske on Unsplash

Bridging source code and machine-executable structure

---

Photo by Sasun Bughdaryan on Unsplash

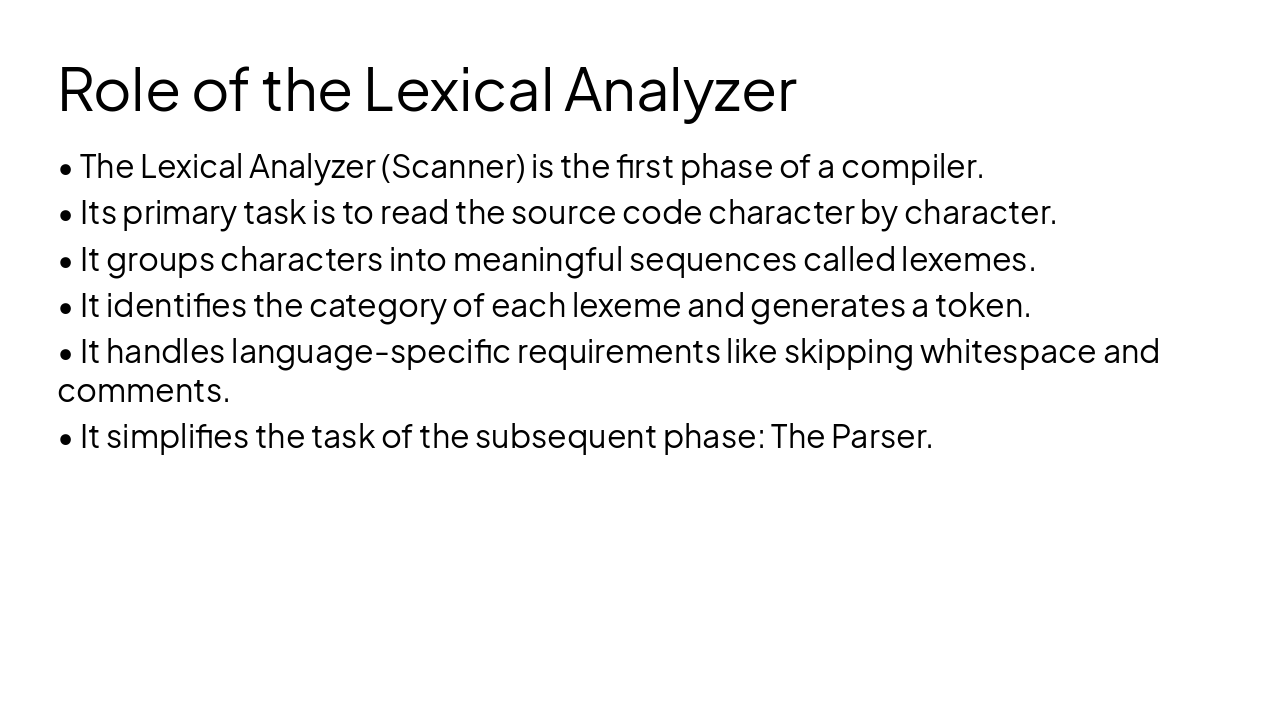

What is a Lexeme? A lexeme is the actual sequence of characters in the source program that matches a pattern for a token. It is the raw 'word' extracted from the code. Example: 'int', 'x', '10'.

What is a Token? A token is an abstract category (symbolic name) that the scanner (the lexical analyzer) assigns to a lexeme. It is a pair consisting of a token name and an optional attribute value. Example: 'KEYWORD', 'IDENTIFIER', 'NUMBER', the token names for the lexemes 'int', 'x', and '10'.
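A minimal Python sketch of a scanner, assuming a tiny C-like fragment: each token name is paired with a regular-expression pattern, every substring a pattern matches is a lexeme, and the scanner emits (token name, lexeme, attribute) triples. The keyword set, the OPERATOR and PUNCTUATION token names, and storing an identifier's own name as its attribute (real compilers usually store a symbol-table entry instead) are illustrative assumptions, not part of the original slides.

```python
import re
from typing import List, NamedTuple, Optional, Union

# Hypothetical keyword set for a tiny C-like fragment (an assumption, not a real language spec).
KEYWORDS = {"int", "float", "return"}

# Each token name is paired with a regex pattern; earlier alternatives are tried first.
TOKEN_PATTERNS = [
    ("NUMBER", r"\d+"),
    ("IDENTIFIER", r"[A-Za-z_]\w*"),
    ("OPERATOR", r"[=+\-*/]"),       # hypothetical token name
    ("PUNCTUATION", r"[;(),{}]"),    # hypothetical token name
    ("WHITESPACE", r"\s+"),
]
MASTER_PATTERN = re.compile("|".join(f"(?P<{name}>{regex})" for name, regex in TOKEN_PATTERNS))


class Token(NamedTuple):
    name: str                               # token name, e.g. KEYWORD
    lexeme: str                             # the raw characters that matched the pattern
    attribute: Optional[Union[str, int]]    # optional attribute value


def tokenize(source: str) -> List[Token]:
    """Scan the source left to right, turning each lexeme into a token."""
    tokens: List[Token] = []
    pos = 0
    while pos < len(source):
        match = MASTER_PATTERN.match(source, pos)
        if match is None:
            raise SyntaxError(f"Unexpected character {source[pos]!r} at position {pos}")
        kind = match.lastgroup  # name of the pattern that matched
        lexeme = match.group()
        pos = match.end()
        if kind == "WHITESPACE":
            continue  # whitespace separates lexemes but produces no token
        if kind == "IDENTIFIER" and lexeme in KEYWORDS:
            tokens.append(Token("KEYWORD", lexeme, None))
        elif kind == "IDENTIFIER":
            # Simplification: keep the name itself instead of a symbol-table reference.
            tokens.append(Token("IDENTIFIER", lexeme, lexeme))
        elif kind == "NUMBER":
            tokens.append(Token("NUMBER", lexeme, int(lexeme)))
        else:
            tokens.append(Token(kind, lexeme, None))
    return tokens


for token in tokenize("int x = 10;"):
    print(token.name, repr(token.lexeme), token.attribute)
```

On `int x = 10;` the scanner yields five tokens: the lexeme `10`, for instance, becomes a NUMBER token whose attribute is the integer 10, while the keyword `int` needs no attribute.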

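Lexical analysis transforms raw source code into a stream of tokens, so the parser works with categorized units instead of individual characters, which makes parsing simpler and more efficient.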

Final Thoughts

---

Photo by Sergei Nikulin on Unsplash
